AI tools are now writing R&D claim narratives, building development code and, in some cases, doing both. HMRC's compliance framework has adapted. Companies and advisors that do not understand the distinction between genuine R&D and AI-assisted activity risk submitting claims that will not survive scrutiny. This article sets out what has changed, why it matters, and what good practice now looks like.

Table of Contents

- What does "AI-prepared" mean in the context of R&D claims?

- Why does the Additional Information Form make AI-generated narratives so risky?

- What does HMRC's competent professional test actually require?

- Has AI development changed the qualifying threshold for R&D itself?

- Where does genuine R&D still exist in an AI-enabled development environment?

- What should companies do now?

- Frequently asked questions

What does "AI-prepared" mean in the context of R&D claims?

"AI-prepared" covers two distinct phenomena, and it is important not to conflate them.

The first is using AI tools such as ChatGPT or similar large language models to draft the technical narrative or project descriptions that accompany an R&D tax credit claim. This is widespread. A CFO or claim preparer feeds a few bullet points about a software development project into an AI tool and receives a polished, technically plausible-sounding narrative in return. The narrative may be grammatically impeccable yet entirely generic. It will typically fail to identify the specific technological uncertainty, the competent professional who made that identification, or why the solution was not readily deducible from existing knowledge.

The second is using AI coding tools, such as GitHub Copilot, Cursor, or similar products, as part of the actual development work being claimed. HMRC data for 2023-24 shows 46,950 R&D claims in total, a 26% decline from the year before. The software sector remains one of the largest claim categories. According to the Stack Overflow 2025 Developer Survey, 84% of professional developers now use or plan to use AI coding tools, with more than half using them daily. GitHub reports that Copilot now generates 46% of code written by active users. For claims built around software development, this matters directly.

These two phenomena present different risks, but both demand careful thought before any claim is submitted.

Why does the Additional Information Form make AI-generated narratives so risky?

From 8 August 2023, all R&D claims must be accompanied by a mandatory Additional Information Form (AIF). Without the AIF, any claim in a Corporation Tax return is invalid. The AIF is not a summary form. It requires project-level detail: the specific scientific or technological advance sought, the uncertainty being resolved, why that uncertainty was not readily deducible by a competent professional and how the project sought to overcome it.

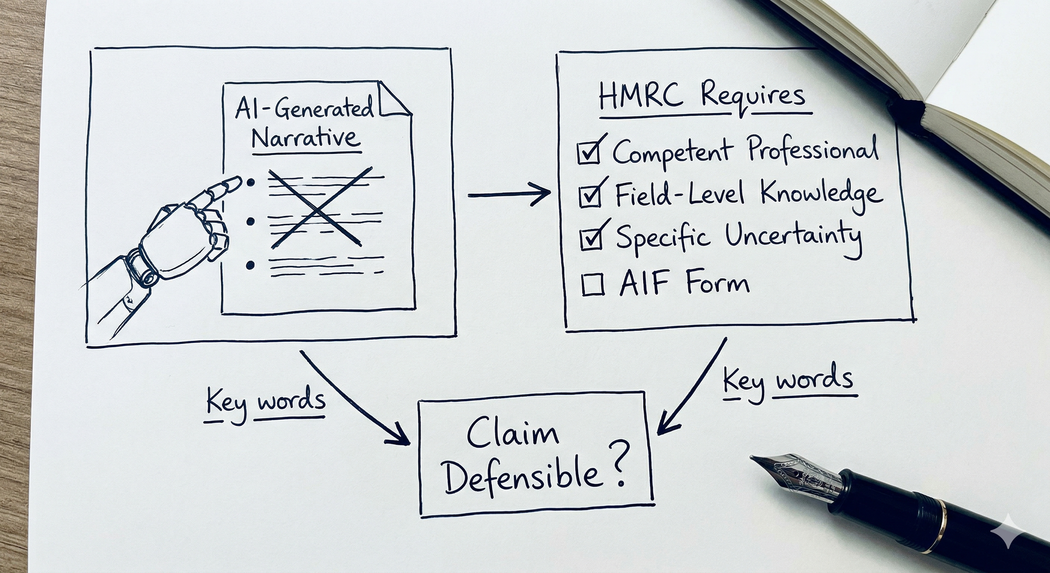

AI-generated narratives consistently struggle with this requirement. The problem is not that the narrative reads badly. The problem is that it reads generically. It describes a category of activity rather than a specific project. It references "novel algorithms" and "innovative architectures" without identifying the actual technical constraint or the field-level knowledge gap the project was trying to resolve.

HMRC's Guidelines for Compliance (GfC3, updated January 2025) are explicit: the opinion of a competent professional must set out the current state of relevant knowledge in the field and explain why what is being sought represents an advance relative to that baseline. This is a factual assessment anchored in a specific person's expertise at a specific point in time. It cannot be generated from a prompt. Any advisor offering to streamline an R&D claim using AI narration tools is creating a document that may be technically inadmissible and will almost certainly not survive a compliance check.

Enquiries can be opened precisely because the technical narrative across multiple projects reads as if it were produced from a common template. HMRC's case managers are experienced readers. They notice patterns.

What does HMRC's competent professional test actually require?

The competent professional requirement is the backbone of the UK R&D framework. Under the DSIT Guidelines (issued by statutory instrument under s.1006 Income Tax Act 2007 and applying to accounting periods beginning on or after 1 April 2023), a project must seek an advance in overall scientific or technological knowledge or capability. Whether that advance is genuine depends on whether the solution was readily deducible by a competent professional working in the field.

HMRC's GfC3 sets out exactly what the opinion of a competent professional must contain:

- The depth of the professional's knowledge and experience in the relevant field.

- The current state of knowledge in the field at the time the project began.

- What the advance being sought is.

- Why it represents an advance relative to field-level knowledge.

- How the advance relates to knowledge, capability, or both.
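
As a rough illustration, the five elements above can be treated as a pre-submission checklist. The sketch below is not an HMRC schema: the class name and field names are hypothetical labels chosen for this example, and a non-empty field says nothing about whether the content is specific enough to satisfy GfC3.

```python
from dataclasses import dataclass, fields


@dataclass
class CompetentProfessionalOpinion:
    """Hypothetical record mirroring the five GfC3 elements listed above."""
    professional_experience: str = ""       # depth of knowledge and experience in the field
    state_of_knowledge_at_start: str = ""   # field-level baseline when the project began
    advance_sought: str = ""                # what the advance being sought is
    why_it_is_an_advance: str = ""          # why it goes beyond field-level knowledge
    knowledge_or_capability: str = ""       # relates to knowledge, capability, or both


def missing_elements(opinion: CompetentProfessionalOpinion) -> list[str]:
    """Return the names of any elements left blank, in declaration order."""
    return [f.name for f in fields(opinion) if not getattr(opinion, f.name).strip()]


# Illustrative draft with only two of the five elements completed.
draft = CompetentProfessionalOpinion(
    professional_experience="15 years in distributed systems engineering",
    advance_sought="Deterministic replay under partial network partition",
)
print(missing_elements(draft))
```

A check like this only catches omissions. Whether each element is anchored in a real person's expertise at a specific point in time remains a professional judgment.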

HMRC has reduced claims to zero where a competent professional has not been identified. It has also, as GfC3 notes explicitly, taken the view that "having worked in a field or having an intelligent interest alone" does not constitute competence. The professional must be suitably qualified or experienced in the specific field of science or technology relevant to the advance being sought.

An AI tool produces no opinion. It generates text. Those are categorically different things, and any claim that relies on AI-generated text to substitute for a genuine professional assessment will fail this test.

Has AI development changed the qualifying threshold for R&D itself?

This is a more nuanced question, and it deserves a direct answer.

The statutory test has not changed. What has changed is the practical baseline against which technological uncertainty is assessed. If a competent professional now routinely works with AI coding tools that can synthesise approaches from millions of repositories instantaneously, then problems that once required genuine investigative effort may no longer constitute scientific or technological uncertainty under the DSIT Guidelines. If the solution is something an AI tool could generate from a plain-language description in an afternoon, it is difficult to argue that the solution was not readily deducible.

HMRC's own September 2025 R&D statistics reflect this pressure. SME claims fell by 31% in 2023-24. First-time claimants have dropped from a peak of 19,720 to approximately 3,765. Part of that decline reflects the compliance campaign and the deterrent effect of the Mandatory Random Enquiry Programme. Part of it reflects a genuine contraction in qualifying activity as AI tools absorb the kinds of implementation challenges that previously attracted relief.

This does not mean software R&D has disappeared. It means the centre of gravity is shifting. Claims built around integrating known APIs, optimising known database architectures, or automating known business workflows are under increasing pressure. Claims built around genuinely novel model architectures, frontier applications of machine learning to previously unsolved problems, or development work in regulated and security-constrained environments where AI tooling is prohibited are likely to remain well within scope.

The PAYE cap also creates a structural issue for AI-augmented teams that advisors should flag proactively. Under both the merged scheme and Enhanced R&D Intensive Support, payable credit is capped at £20,000 plus 300% of PAYE and NIC liabilities. A lean team of two or three senior engineers directing AI agents may have PAYE liabilities that do not reflect the genuine scale of the research activity being undertaken. Smaller businesses relying on cash credits should model this carefully before building a claim strategy on assumptions derived from a larger headcount.
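
To make the arithmetic concrete, here is a minimal sketch of the cap as described above: £20,000 plus 300% of PAYE and NIC liabilities. It is a simplification for modelling purposes only. It assumes a full twelve-month period and ignores exemptions from the cap, connected-party liabilities and any other adjustments. The function names and the figures in the example are hypothetical.

```python
from decimal import Decimal

CAP_BASE = Decimal("20000")    # fixed element of the cap, as stated in the article
CAP_MULTIPLIER = Decimal("3")  # 300% of PAYE and NIC liabilities


def paye_cap(paye_and_nic: Decimal) -> Decimal:
    """Cap on payable credit: 20,000 plus 300% of PAYE and NIC liabilities."""
    return CAP_BASE + CAP_MULTIPLIER * paye_and_nic


def payable_credit(credit_before_cap: Decimal, paye_and_nic: Decimal) -> Decimal:
    """Payable credit is the lower of the computed credit and the cap."""
    return min(credit_before_cap, paye_cap(paye_and_nic))


# Hypothetical lean team: 30,000 of PAYE and NIC, 150,000 of credit before the cap.
cap = paye_cap(Decimal("30000"))                                # 20,000 + 90,000
payable = payable_credit(Decimal("150000"), Decimal("30000"))   # restricted to the cap
print(f"cap: {cap}, payable: {payable}")
```

In this illustrative case the cap of £110,000 bites and £40,000 of the computed credit is not payable, which is the kind of shortfall a small, AI-augmented team should model before relying on cash credits.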

Where does genuine R&D still exist in an AI-enabled development environment?

The answer is: in the places where AI cannot easily follow.

Government and defence contracts frequently mandate that code be human-written, explainable and auditable. AI-generated code is prohibited by procurement standards and security requirements in a meaningful proportion of regulated system development. Technological uncertainty in these environments is genuine, the constraints are structural, and the work involves navigating boundaries that AI tools cannot yet reach.

Frontier AI research itself qualifies by definition. Companies developing new model architectures, training new approaches for previously unsolved problems, or applying machine learning in genuinely novel ways are operating at the edge of scientific knowledge. These are not the companies concerned about whether their Agile sprints qualify.

Equally, AI is creating new categories of qualifying activity in sectors that were previously outside the R&D conversation. Logistics, agriculture, healthcare and legal technology companies building proprietary optimisation models or novel natural language applications may well be undertaking work that meets the DSIT threshold. Some of the opportunity that is disappearing in commodity SaaS is re-emerging in applied science and complex domain-specific development.

The key question in every case remains the same: was there genuine scientific or technological uncertainty that a competent professional could not readily resolve? If the answer is yes, and the evidence supports it, the claim is defensible. If the answer is "probably, and the AI helped us describe it," the claim is not.

Key takeaway: The test for R&D qualification has not changed. But AI tools have raised the baseline for what a competent professional can readily deduce, and that shift requires every claim to be assessed afresh rather than by relying on precedents from two or three years ago.

What should companies do now?

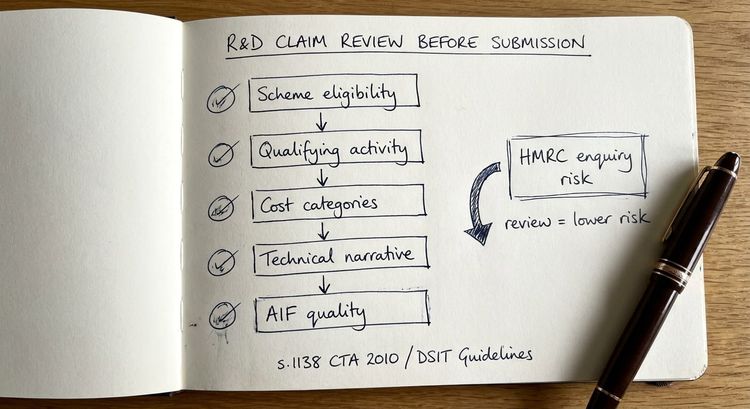

If your company is preparing an R&D claim that touches software development, and particularly if AI coding tools form part of your development workflow, there are three things to address before submission.

- Ensure the technical narrative is written by or under the direct supervision of a genuine competent professional. That person must be able to articulate the state of field-level knowledge at the time the project began and explain why the uncertainty could not be resolved without investigative work. No AI tool can substitute for this assessment. If your current preparer cannot identify who the competent professional is, the claim requires review before it goes anywhere near HMRC.

- Document AI tool usage honestly. Where AI coding tools assisted with development, be clear about the nature of that assistance. The question is whether the architectural and engineering decisions involved genuine uncertainty, not whether any code was AI-generated. The evidence standard is shifting: in an AI-assisted environment, documentation must capture the cognitive effort behind architectural choices, the testing and validation of AI-generated outputs, and the specific points where established approaches proved inadequate.

- Consider whether a claim that passed scrutiny in 2022 or 2023 will pass the same scrutiny today. The baseline has moved. Claims that were reasonable two years ago may need reassessment against the current state of AI tooling in the relevant field.
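
One way to capture the evidence described in the second point is a dated record written at the time each decision is made, rather than reconstructed later. The sketch below is an assumption about what such a record could look like, not a format prescribed by HMRC. The class name, field names and example text are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class AiUsageRecord:
    """Hypothetical evidence entry for one architectural decision."""
    recorded_on: date                   # when the entry was written
    architectural_decision: str         # the choice made and the reasoning behind it
    ai_assistance: str                  # what the AI tool contributed, stated honestly
    validation_of_ai_output: str        # how AI-generated output was tested
    where_known_approaches_failed: str  # the point where established methods proved inadequate


def to_json(record: AiUsageRecord) -> str:
    """Serialise a record for storage alongside the project's other evidence."""
    data = asdict(record)
    data["recorded_on"] = record.recorded_on.isoformat()  # dates are not JSON-native
    return json.dumps(data, indent=2)


entry = AiUsageRecord(
    recorded_on=date(2025, 1, 15),
    architectural_decision="Replaced the polling design with an event log",
    ai_assistance="Copilot suggested boilerplate for the consumer loop",
    validation_of_ai_output="Property-based tests against recorded traffic",
    where_known_approaches_failed="Published patterns could not meet the latency constraint",
)
print(to_json(entry))
```

The structure matters less than the habit: each entry ties a specific decision to a specific date and separates what the tool produced from what the engineers had to work out.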

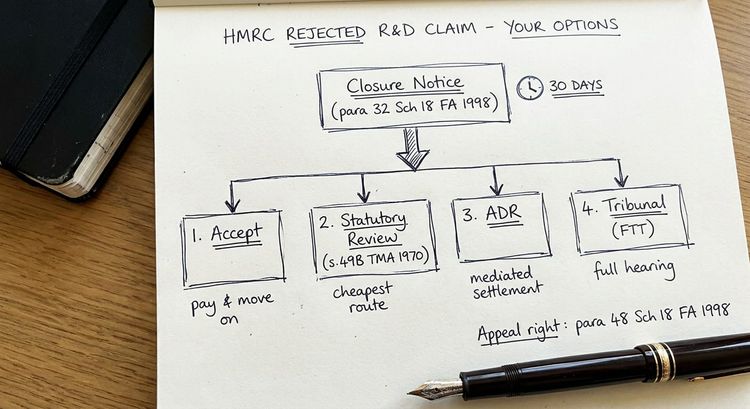

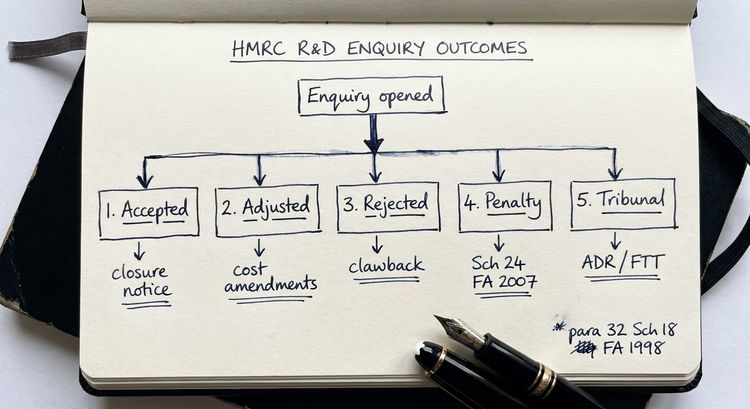

If your claim has already attracted an enquiry or you are concerned about one, the first priority is to understand exactly what HMRC has questioned. My article on how to respond to an HMRC R&D enquiry letter covers the procedural steps in detail.

For cases involving complex software claims, weak technical narratives, or AI development environments, specialist input at the earliest stage is almost always more effective than attempting to reconstruct defensible evidence after an enquiry has opened.

Frequently asked questions

Can I use AI to help write my R&D tax credit claim?

AI tools can assist with research, drafting, and structuring your thinking, but they cannot replace the substantive professional assessment that HMRC requires. The competent professional opinion must be genuine. It must reflect the specific state of field-level knowledge at a particular point in time and identify the specific uncertainty the project resolved. An AI tool produces plausible text. HMRC requires an evidenced professional judgment. These are not the same thing.

Will HMRC know if my technical narrative was AI-generated?

HMRC does not need to detect AI involvement formally to open an enquiry. Claim compliance officers assess technical narratives for specificity, project-level detail and internal consistency. Narratives that read as generic, that fail to identify the specific uncertainty or the competent professional, or that describe a category of activity rather than a real project attract scrutiny regardless of how they were produced. The AIF requirement, mandatory since August 2023, makes this gap particularly visible.

Has AI development work automatically changed what qualifies as R&D?

Not automatically, but the qualifying threshold is under pressure. The DSIT Guidelines define uncertainty as something not readily deducible by a competent professional working in the field. If that professional now has access to AI tools that can surface solutions previously requiring weeks of research, some activities that qualified in 2021 or 2022 may not qualify today. Each claim must be assessed against the current state of technology in the field, not against historical precedent.

My development team uses GitHub Copilot extensively. Can we still claim R&D?

Potentially yes, but the analysis requires care. The question is not whether AI tools were used, but whether the work involved genuine scientific or technological uncertainty that the tools could not resolve. Novel architectural decisions, frontier model development, development in constrained regulatory environments and work involving genuinely new computational approaches may still qualify. Routine implementation of known patterns, even if the code was written without AI tools, does not qualify. The tool usage is a factor in the assessment, not a disqualifier in itself.

What does the merged R&D scheme mean for AI-assisted development claims?

The merged scheme, which applies from accounting periods beginning on or after 1 April 2024, consolidates the previous SME and RDEC frameworks. The qualifying test remains the DSIT Guidelines: an advance in science or technology through the resolution of genuine uncertainty. The merged scheme also preserves the PAYE cap on payable credits. For lean, AI-augmented teams where PAYE liabilities do not reflect the scale of research activity, this cap is a significant modelling issue that advisors should address before submission.

When should I instruct a specialist for a software R&D claim?

Sooner than most companies do. The Additional Information Form is mandatory and must be submitted alongside the claim. Errors or omissions on that form cannot easily be corrected once HMRC has noticed them. If your development workflow involves AI tools, if your competent professional is not clearly identified, or if your claim covers more than one accounting period under different scheme rules, specialist input before submission is almost always more cost-effective than managing an enquiry afterwards. My R&D tax credit defence service covers both pre-submission reviews and active enquiry defence.

Is HMRC specifically targeting AI-prepared claims?

HMRC's compliance framework is risk-based. The Mandatory Random Enquiry Programme selects a sample of claims regardless of content. Beyond that, claims are assessed against risk indicators that include the quality of the technical narrative, the plausibility of the cost base and consistency across the Additional Information Form. AI-generated narratives are not automatically flagged, but they frequently exhibit the generic, unspecific characteristics that place claims in higher risk categories. Whether HMRC frames its investigation as targeting AI-prepared claims matters less than the outcome for the company: an enquiry that could have been avoided with better evidence.

Next steps

If you are concerned about a claim you have submitted, or you are preparing one that involves AI development tools or AI-assisted narration, I am happy to carry out a review. The earlier a specialist looks at the evidence, the more options you have.

Contact me at stevelivingston@iptaxsolutions.co.uk or call 0161 961 0096.

Steve Livingston FCA is the founder of IP Tax Solutions, a specialist innovation tax advisory firm. Former KPMG, former partner at Crowe. For a detailed guide to responding if HMRC has already made contact, see my article on how to respond to an HMRC R&D tax credit enquiry letter.